I have 2 projects floating around in my head, and 1 deployed but incomplete.

I’m on NYE break.. another 5 days or so of vacation. This would be a perfect time to work on these projects. If that is what I want to do.

Yet, I find I am reluctant to start. Maybe I get more enjoyment out of thinking about what I could do than out of actually doing it?

It’s probably similar to the idea of not-running while on vacation. If I’m going to do it, it needs to be part of normal-life, I think, not trying to beat it into submission with the vacation-card.

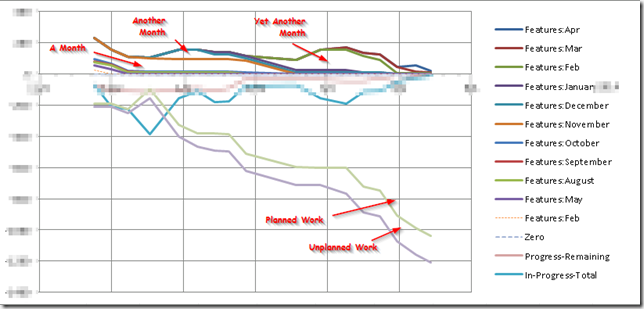

Idea #1 – “Burndown”

I’ve had this for several years. It’s a cross between Smartsheet and Rally, plus “always remembering historical scope and work left” so it can generate burndown graphs for any node in the tree. It’s meant to be a multi-person hierarchical task-for-a-project list, with templates and stuff in an advanced mode.

The trick to making this one work is a UX that makes it as easy as possible to get things done.
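A minimal sketch of the “remember historical scope and left for any node” idea, in Python for brevity. The `TaskNode` shape, its field names, and the once-a-day `snapshot` call are all assumptions made up for illustration; nothing here is from an existing design. Each node rolls up its own numbers plus its descendants’, and stores a snapshot per day, so a burndown graph for any node is just a plot of that node’s `history`.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class TaskNode:
    """One node in the hierarchical task list (hypothetical shape)."""
    name: str
    scope: float = 0.0       # estimated work on this node itself
    remaining: float = 0.0   # work left on this node itself
    children: list[TaskNode] = field(default_factory=list)
    # (day, rolled-up scope, rolled-up remaining) snapshots for this node.
    history: list[tuple[int, float, float]] = field(default_factory=list)

    def rollup(self) -> tuple[float, float]:
        """Return (scope, remaining) for this node plus all descendants."""
        scope, remaining = self.scope, self.remaining
        for child in self.children:
            child_scope, child_remaining = child.rollup()
            scope += child_scope
            remaining += child_remaining
        return scope, remaining

    def snapshot(self, day: int) -> None:
        """Record the rolled-up totals on every node in this subtree."""
        scope, remaining = self.rollup()
        self.history.append((day, scope, remaining))
        for child in self.children:
            child.snapshot(day)


project = TaskNode("project", children=[
    TaskNode("design", scope=5, remaining=5),
    TaskNode("build", scope=8, remaining=8),
])
project.snapshot(day=0)
project.children[0].remaining = 2    # progress on design
project.children[1].scope = 10       # scope grew on build
project.children[1].remaining = 10
project.snapshot(day=1)
print(project.history)
```

Keeping scope and remaining as separate series is what lets the graph show scope growth apart from actual progress. Re-running `rollup` at every level is wasteful on deep trees, but it keeps the sketch short.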

Idea #2 – “Muddy Sky”

I want it so that I could write a multiplayer version of Endless Sky or even Elite, but with MUD-like conventions on the server side .. your spaceship is “floating” within a “room”, and interacts with the other objects in that room, and all the client/server stuff is handled over SignalR, probably using JavaScript for the client.

The solution in my head currently consists of:

- JavaScript Canvas on the client side, with translation for rotating ship graphics
- SignalR for payload transfer from server to client
- property transfer dictionary of object / key / value for transferring game state from server to client
- the “player” object on the server side remembers what was sent before
- the player object on the server gets called to collect what it thinks its client is interested in
- some code to compare that against what was sent before, so that only changed values get sent down in a client update
- different kinds of messaging:
  - “the world has changed” server->client (described above) to provide the client with the latest that it needs for rendering
  - “event” server->client – this would be stuff like “something blew up”, or “somebody said something”
    - on the server side, this would broadcast to a room or to a particular player object
  - “command” client->server – this is stuff like thrust, turn left, turn right, etc.
    - I can already tell these will likely need to be “thrust for 1 sec” etc., because I’m not sure how time sync would work
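The “remember what was sent, only send what changed” part of the list above could look roughly like this. It is a sketch in Python rather than the C# the server would actually use, and `PlayerView`, `delta`, and the `changed` / `removed` payload shape are made-up names, not anything SignalR provides.

```python
from typing import Any

# object id -> {property name -> value}
State = dict[str, dict[str, Any]]


class PlayerView:
    """Server-side record of what one client has already been sent."""

    def __init__(self) -> None:
        self.sent: State = {}

    def delta(self, interesting: State) -> dict[str, Any]:
        """Return only what changed since the last update, then remember it."""
        changed: State = {}
        for obj_id, props in interesting.items():
            old = self.sent.get(obj_id, {})
            diff = {k: v for k, v in props.items()
                    if k not in old or old[k] != v}
            if diff:
                changed[obj_id] = diff
        # Objects the client knew about that are no longer of interest.
        removed = [obj_id for obj_id in self.sent if obj_id not in interesting]
        # Copy, so later mutation of the caller's dicts cannot corrupt the record.
        self.sent = {obj_id: dict(props) for obj_id, props in interesting.items()}
        return {"changed": changed, "removed": removed}


view = PlayerView()
first = view.delta({
    "ship1": {"x": 0, "y": 0, "heading": 90},
    "rock7": {"x": 5, "y": 5},
})
second = view.delta({"ship1": {"x": 1, "y": 0, "heading": 90}})
print(second)
```

The first call sends everything, the second sends only `ship1`’s new `x` plus the fact that `rock7` dropped out of view. A property that disappears from an object that is still in view is not reported here; that case would need its own handling.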

Challenges:

- latency – I don’t know that SignalR will be fast enough to get the latest updates from server down to client. I’m also not sure that the code for reducing payload size is worth it. And I haven’t written any code yet!!!!
- time tracking – the client has to deal with different FPS, the server has to deal with different ticks per second.
  - I was thinking that all commands / events etc. are stuff like “at tick XXXXX, thrust at 50% for 3 ticks”
  - the server deals with things in desired-tick order, possibly one tick at a time (or at a set server interval. 100 ms?)
  - start off with fixed time intervals, and then auto-tune from there
    - auto-tune: how fast can the client update its screen? No need to get updates from the server any faster than that. Let the server know that.
    - auto-tune: how much has changed, and does the server need to send an update to this client yet? Note that stuff that has changed can stay in the buffer to be sent; it doesn’t have to be re-detected.
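The “at tick XXXXX, thrust at 50% for 3 ticks” idea and the “desired-tick order” processing can be sketched with a priority queue. This is Python using the standard `heapq` module; `Command`, `TickQueue`, `submit`, and `due` are hypothetical names, and expanding a multi-tick command into one entry per tick is just one possible choice.

```python
import heapq
from dataclasses import dataclass, field


@dataclass(order=True)
class Command:
    tick: int                              # tick at which the command applies
    seq: int                               # arrival order, breaks ties in a tick
    action: str = field(compare=False)     # e.g. "thrust"
    amount: float = field(compare=False)   # e.g. 0.5 for 50%


class TickQueue:
    """Holds client commands until the server reaches their tick."""

    def __init__(self) -> None:
        self._heap: list[Command] = []
        self._seq = 0

    def submit(self, tick: int, action: str, amount: float,
               duration: int = 1) -> None:
        """Expand 'at tick T, do X for N ticks' into one entry per tick."""
        for t in range(tick, tick + duration):
            heapq.heappush(self._heap, Command(t, self._seq, action, amount))
            self._seq += 1

    def due(self, current_tick: int) -> list[Command]:
        """Pop every command scheduled at or before current_tick, in order."""
        ready = []
        while self._heap and self._heap[0].tick <= current_tick:
            ready.append(heapq.heappop(self._heap))
        return ready


queue = TickQueue()
queue.submit(tick=102, action="thrust", amount=0.5, duration=3)
queue.submit(tick=101, action="turn_left", amount=1.0)

# Server loop: one tick at a time, commands come out in desired-tick order.
ran = [(c.tick, c.action) for t in range(100, 105) for c in queue.due(t)]
print(ran)
```

A command that arrives after its tick has already passed simply runs on the next server tick in this sketch. Whether to apply it late, drop it, or rewind is a design question the post leaves open.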

Gameplay challenges:

- I want it to be like Endless Sky…
  - It’s a 2D world
  - things overlap each other, (mostly) no collision
  - basic operations are thrust, rotate
  - there are ship computers for dealing with more complicated things
- except that, kind of in order:
  - no speed limit. Thrust one way, thrust the other way
    - this is why you want an AI to guide you to someplace
  - don’t fly over a star, you’ll overheat and explode
  - there COULD be gravity
    - I’ll suspend disbelief, and have the planets/sun/etc. not do the gravity simulation bit, but I want the ships and torpedoes to be affected by gravity.
    - However, then there’s a discrepancy .. so maybe planets do orbit via gravity.
    - There would have to be something like “I want a planet of mass M=x to orbit that star with an aphelion, perihelion = y, z” and it calculates the starting x,y and velocity or something. And then just calculate where the planet will be at a given time T and what its velocity will be.
    - If we go with gravity, then there will be bigger distances between planets
  - HYPERSPACE is a different space that your ship can enter
    - masses exert anti-gravity, i.e., you get pushed away from planets, suns, etc. r^3 instead of r^2?
      - this will probably cause some interesting “focus” points where everything gets pushed towards.
      - I wonder how those focus points would move as things orbit each other in normal space?
    - I want velocity in hyperspace to be exponential, like it is in FSD in Elite, i.e., you control your acceleration up/down. You can then get to insane levels of acceleration .. in a certain direction.
      - You will really need a computer to guide you in this world.
    - “Interdiction” à la Elite would be done by firing interdiction missiles through hyperspace at your target.. possibly homing?
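For the “planet with a given aphelion and perihelion, where is it at time T” item above, standard two-body orbit formulas give a closed-form setup plus a small iterative solve, so no step-by-step gravity simulation is needed for planets. The sketch below is Python and rests on loudly stated assumptions: the planet is much lighter than the star (so its own mass drops out), the star sits at the origin, the planet is at perihelion on the +x axis at t = 0, and `mu` is the star’s G times mass in whatever game units get chosen. The function names are made up.

```python
import math


def orbit_setup(mu: float, aphelion: float, perihelion: float) -> dict[str, float]:
    """Orbit parameters from the farthest and closest distances to the star."""
    a = (aphelion + perihelion) / 2.0                       # semi-major axis
    e = (aphelion - perihelion) / (aphelion + perihelion)   # eccentricity
    v_peri = math.sqrt(mu * (2.0 / perihelion - 1.0 / a))   # vis-viva equation
    period = 2.0 * math.pi * math.sqrt(a ** 3 / mu)         # Kepler's third law
    return {"a": a, "e": e, "v_perihelion": v_peri, "period": period}


def state_at(mu: float, a: float, e: float, t: float) -> tuple[float, float, float, float]:
    """Return (x, y, vx, vy) at time t for a counter-clockwise orbit."""
    n = math.sqrt(mu / a ** 3)                  # mean motion, radians per time
    mean_anomaly = math.fmod(n * t, 2.0 * math.pi)
    # Solve Kepler's equation M = E - e*sin(E) for E with Newton's method.
    ecc_anomaly = mean_anomaly if e < 0.8 else math.pi
    for _ in range(50):
        step = ((ecc_anomaly - e * math.sin(ecc_anomaly) - mean_anomaly)
                / (1.0 - e * math.cos(ecc_anomaly)))
        ecc_anomaly -= step
        if abs(step) < 1e-12:
            break
    b_over_a = math.sqrt(1.0 - e * e)
    x = a * (math.cos(ecc_anomaly) - e)
    y = a * b_over_a * math.sin(ecc_anomaly)
    rate = n / (1.0 - e * math.cos(ecc_anomaly))   # dE/dt
    vx = -a * math.sin(ecc_anomaly) * rate
    vy = a * b_over_a * math.cos(ecc_anomaly) * rate
    return x, y, vx, vy


orbit = orbit_setup(mu=1.0, aphelion=3.0, perihelion=1.0)
start = state_at(1.0, orbit["a"], orbit["e"], 0.0)
half = state_at(1.0, orbit["a"], orbit["e"], orbit["period"] / 2.0)
print(orbit, start, half)
```

At t = 0 the planet is at distance `perihelion` moving at `v_perihelion`; half a period later it sits at distance `aphelion` on the opposite side of the star. Ships and torpedoes would still need a per-tick integration, since their thrust changes their path.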

See, there are so many places I want this to go. Hence, I want a nice development system, in C#, similar to how we used to run LPMuds.. where I can play with these different mechanics (server side).. and provide all the hooks that others might need to make the game interesting.
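One way to keep mechanics swappable like that is to make each rule a small function the server looks up, so normal-space gravity and hyperspace anti-gravity are just two hooks behind the same call. A rough sketch follows, in Python for brevity rather than C#. The `ForceLaw` type, the function names, and `G = 1.0` are invented for illustration, and reading “r^3 instead of r^2” as a 1/r^3 falloff is an assumption, since the post only poses it as a question.

```python
import math
from typing import Callable

# A force law maps (body mass, distance) -> signed radial acceleration.
# Negative pulls the ship toward the body, positive pushes it away.
ForceLaw = Callable[[float, float], float]

G = 1.0  # gravitational constant in made-up game units (assumption)


def normal_gravity(mass: float, r: float) -> float:
    """Ordinary attraction, falling off as 1/r^2."""
    return -G * mass / r ** 2


def hyperspace_repulsion(mass: float, r: float) -> float:
    """Anti-gravity push, assumed here to fall off as 1/r^3."""
    return G * mass / r ** 3


def acceleration(ship: tuple[float, float],
                 bodies: list[tuple[float, float, float]],
                 law: ForceLaw) -> tuple[float, float]:
    """Sum the acceleration on a ship from (x, y, mass) bodies under one law."""
    ax = ay = 0.0
    for bx, by, mass in bodies:
        dx, dy = ship[0] - bx, ship[1] - by   # vector from body to ship
        r = math.hypot(dx, dy)
        if r == 0.0:
            continue                          # sitting exactly on the body
        radial = law(mass, r)
        ax += radial * dx / r
        ay += radial * dy / r
    return ax, ay


star = [(0.0, 0.0, 8.0)]
pull = acceleration((2.0, 0.0), star, normal_gravity)
push = acceleration((2.0, 0.0), star, hyperspace_repulsion)
# Midway between two equal masses the pushes cancel out.
pair = [(-1.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
balance = acceleration((0.0, 0.0), pair, hyperspace_repulsion)
print(pull, push, balance)
```

Swapping the `law` argument is the whole hook: trying a different exponent or a different rule per “room” means writing one more small function, not touching the integration loop.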

Based on the amount I’ve typed, I know that the game is the one I want to work on the most.

But I also know that vacation time is not enough to get this to where I want it to be. Sure I might get some stuff knocked out, but it won’t be the juicy stuff. So, I’m loath to start.

Maybe I’ll go mine some more asteroids in Elite.

Or maybe I’ll set up mini-simulations of what the game play would be like, and just do that rather than build the game.

Maybe I need to start visiting the game-development groups in Louisville on a more regular basis.

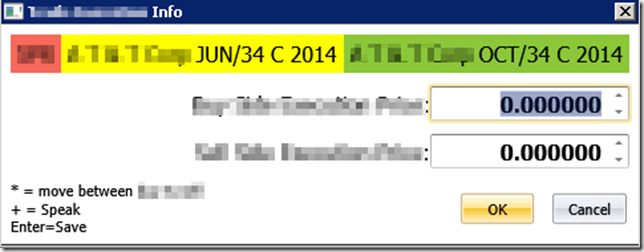

I recently upgraded my mom’s store to use

I had to call them a few times. Freaking awesome – the people I talked to were not just some help desk lackeys. These were highly competent technical people (I can tell the difference). At the first sign of miscommunication, they immediately went into “let me remote in and look at your screen” mode and took it from there.

I haven’t posted much code stuff lately. Nothing in particular seems blog-worthy, but hey! after CodePaLousa, I’m making an effort to write about what might be mundane.. in a relatively anonymous way.
