I feel stalled in my project. It might be that I need to write about the important stuff done so far before I continue to redo/optimize the game; setting the stage to understand the need for some of those optimizations.

Reference code for this post (tag): https://github.com/sunnywiz/dotnetmud2015/tree/blog20160302.

The playable game (which might be updated, not frozen to this post) is: http://dotnetmud.azurewebsites.net/Home/SpaceClient

Game state at this time: Multiplayer, can shoot missiles, missiles hit things, score is kept.

There are 3 interacting timing loops:

Client / Server Update Loop

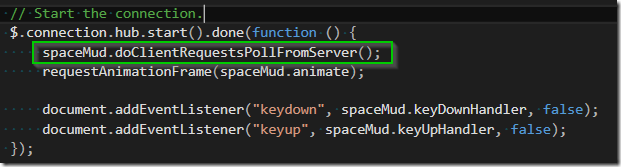

I made the client “in charge” of this. Starting in Javascript, the client requests an update from the server:

Along with the request for game data, the client also sends the state of the user’s controls. I went with “how many ms has the thrust key been pressed” as my input – a separate set of handlers watches key-up and key-down events and logs how long each key has been pressed.
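
A minimal sketch of that key-timing idea, not the code from the repository: the `KeyTimer` class and its method names are hypothetical, and timestamps are passed in so the logic can be exercised without a browser.

```javascript
// Hypothetical helper: accumulates how many milliseconds each key has been
// held since the last time the totals were collected.
class KeyTimer {
  constructor() {
    this.downSince = new Map(); // key -> timestamp when it went down
    this.heldMs = new Map();    // key -> ms accumulated since last collect()
  }

  keyDown(key, now) {
    // Browsers auto-repeat keydown while a key is held; ignore the repeats.
    if (!this.downSince.has(key)) this.downSince.set(key, now);
  }

  keyUp(key, now) {
    if (!this.downSince.has(key)) return;
    this.addHeld(key, now - this.downSince.get(key));
    this.downSince.delete(key);
  }

  addHeld(key, ms) {
    this.heldMs.set(key, (this.heldMs.get(key) || 0) + ms);
  }

  // Return the per-key totals and reset them. Keys that are still held are
  // counted up to `now` and keep accumulating from there.
  collect(now) {
    for (const [key, since] of this.downSince) {
      this.addHeld(key, now - since);
      this.downSince.set(key, now);
    }
    const result = Object.fromEntries(this.heldMs);
    this.heldMs.clear();
    return result;
  }
}

// In a browser this might be wired up as (assumption):
//   const keys = new KeyTimer();
//   window.addEventListener("keydown", e => keys.keyDown(e.key, performance.now()));
//   window.addEventListener("keyup", e => keys.keyUp(e.key, performance.now()));
```

Calling `collect()` right before each request would give the "ms pressed since the last request" numbers to send along with it.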

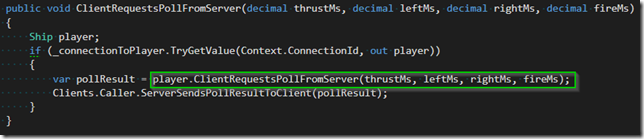

This then travels up through SignalR, and shows up at the server 20-50ms (an eternity) later:

The SignalR side of the hub takes the connection, looks up which server-side game object that connection is tied to, and asks that game object (which happens to be a Ship.cs) to craft what it thinks the world looks like.

The Ship has several things it has to do.

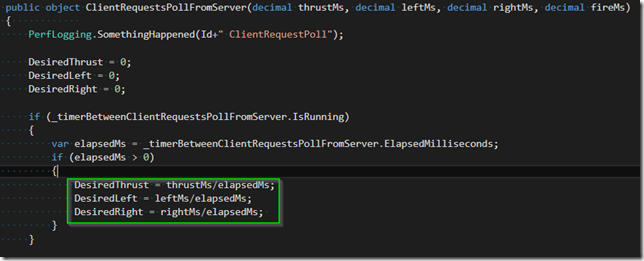

First is to look at the keypresses and turn those into thrust amounts. I went with a % of thrust:

There is similar code for “can I fire another missile yet” (not pictured).
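
The real conversion lives in the C# `Ship.cs`; the sketch below is only an illustration in JavaScript (to stay in the same language as the client), and the function name, its parameters, and the choice of "time since the ship's previous update" as the interval are all assumptions.

```javascript
// Hypothetical: turn "ms the thrust key was held" into a 0..1 thrust fraction
// for the interval since this ship's previous update.
function thrustFraction(heldMs, elapsedMs) {
  if (elapsedMs <= 0) return 0;               // no time passed, nothing to apply
  const fraction = heldMs / elapsedMs;
  return Math.min(1, Math.max(0, fraction));  // a client cannot claim more than 100%
}
```

Clamping on the server matters here because the held time comes from the client, which could report more key-press time than actually elapsed.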

Then, it makes a copy of all the stuff that the client needs to draw the world:

It records itself, all the other 2D objects in space, some scores, and what the current “Server Time” is (and rate of change of server time, which is a YAGNIY for when the server gets overloaded and has to go into bullet time).

It packages all that into a return (of type object, which gets JSON-serialized), and SpaceHub happily sends it back to the client:

Then, 25-50ms later (another eternity), the client receives the game-update:

- Green – the client updates what it knows about what’s going on on the server. I tried to keep this in its own “object space”, to indicate that the server is the authority here.

- Blue – the client updates various things that are of interest to the client

- Purple – there’s some bulk copying going on that isn’t very sexy and is very network-inefficient.

- Red – and the client immediately requests another packet.
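
The receive-then-request cycle can be sketched roughly as follows. This is not the actual client code: `send(input, onReply)` is a hypothetical stand-in for the SignalR round trip and is assumed to reply asynchronously, and `collectInput` and `applyUpdate` are placeholder callbacks.

```javascript
// Hypothetical sketch of the client-driven loop. `send(input, onReply)` stands
// in for the real round trip to the hub; it is an assumption, not the hub's API.
function createPollLoop(send, collectInput, applyUpdate) {
  let running = false;
  let inFlight = false; // true while a request is waiting for its reply

  function requestNext() {
    inFlight = true;
    send(collectInput(), onUpdate);
  }

  function onUpdate(update) {
    inFlight = false;
    if (!running) return;   // stopped while the request was in flight
    applyUpdate(update);    // refresh the client's copy of the server state
    requestNext();          // immediately ask for the next packet
  }

  return {
    start() {
      running = true;
      if (!inFlight) requestNext(); // never run two request chains at once
    },
    stop() {
      running = false;
    },
  };
}
```

Because the next request is only sent from inside the reply handler, a client that dies simply stops asking, and the server stops sending.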

Places to Improve: Network Traffic

The server sends ALL the data, ALL the time. A network packet looks like this (raw, pretty printed):

There is a LOT of stuff in there that is needed once (like image URLs) and then does not change. Also, many property names, while nice for humans, are not optimal for network traffic (“ClientREquestsPollFromServer”, for example).

“No Big Deal?” — Doing a test, holding down the fire button and shooting the planet, for a few minutes, in Azure Data:

Doing some math:

If I have a successful game, and there’s about 1 combat going all the time, we’re looking at $18 per month.

The solution for this is to only send diffs across the network. That’s on my list for pretty soon. But it will require some hand-crafting detail. And some before/after testing. This will be its own blog post.
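
To give a feel for what sending diffs could involve, here is a minimal sketch. It assumes each snapshot is a plain map from object id to a flat bag of properties, which is not necessarily how the real packets are shaped; `diffSnapshots` and `applyDiff` are hypothetical names.

```javascript
// Hypothetical: both snapshots map object id -> flat property bag.
// Returns only the properties that changed, plus the ids that disappeared.
function diffSnapshots(prev, next) {
  const changed = {};
  for (const [id, obj] of Object.entries(next)) {
    const old = prev[id] || {};
    const delta = {};
    for (const [key, value] of Object.entries(obj)) {
      if (old[key] !== value) delta[key] = value;
    }
    if (Object.keys(delta).length > 0) changed[id] = delta;
  }
  const removed = Object.keys(prev).filter((id) => !(id in next));
  return { changed, removed };
}

// Client side: fold a diff into the state built from earlier packets.
function applyDiff(state, diff) {
  for (const [id, delta] of Object.entries(diff.changed)) {
    state[id] = Object.assign(state[id] || {}, delta);
  }
  for (const id of diff.removed) delete state[id];
  return state;
}
```

With this shape, something like an image URL crosses the wire once, when the object first appears, and never again unless it changes. The sketch does not handle nested values or properties that are removed from an object, which is part of the hand-crafting a real version would need.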

Places to Improve: Network Lag

This model is similar to how Kermit used to work – there is a LOT of dead time between updates.

One way to improve this might be to have the client keep track of the lag between server updates, and then, in its request to the server, ask “please give me additional packets at the following times, until you hear from me again”. So, the server could send 2 game-updates for every 1 client-inputs-update. It’s on my list, sometime after sending diffs. I’d have to be careful to mark the intermediate frames as intermediate, so that only the major frames cause the cycle to continue.
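
A rough sketch of the client side of that idea, under stated assumptions: the `intermediate` flag on a frame, the even spacing of the extra frames, and both function names are hypothetical, since none of this exists in the game yet.

```javascript
// Hypothetical: the server tags extra frames with `intermediate: true`.
function createFrameHandler(applyUpdate, requestNext) {
  return function onFrame(frame) {
    applyUpdate(frame);                      // every frame refreshes the display state
    if (!frame.intermediate) requestNext();  // only major frames continue the cycle
  };
}

// Hypothetical: offsets (ms from now) at which the client would like extra
// frames, spaced evenly inside the measured round-trip time.
function extraFrameOffsets(roundTripMs, extraFrames) {
  const offsets = [];
  const step = roundTripMs / (extraFrames + 1);
  for (let i = 1; i <= extraFrames; i++) {
    offsets.push(Math.round(step * i));
  }
  return offsets;
}
```

The client would send the offsets with its request; if it stops requesting, the server has no outstanding schedule left to honor, so the "client reminds the server it exists" property is kept.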

Another direction we could go is to completely decouple the client-input from the server-response. I’m not going to do that, because I like how the client has to constantly remind the server of its existence – if the client goes haywire, I don’t need to write additional server code to detect that and stop the server from sending stuff to the client. I’m also lazy.

In Conclusion

So, there’s the ugliest timing loop in the game so far. The other two are relatively easy, and may not get written about soon.