Story #1: Performance Improvement

Problem: I had 5 people all making the same web service call(s), which were slow, sometimes taking 20 to 30 seconds to complete. On top of that, the system was taking up to 2 minutes to bring the information back.

Diagnosis: ANTS Performance Profiler showed some interesting stuff:

- The initial call to get the list of A1, B2, C1, D2 … took 5 seconds.

- Then a background thread to populate A1a, B2a, C1a, D2a … took another 30 seconds.

- And there was one more thing: for any given A, it needed the sum of A1a, A2a, A3a, even if A2 and A3 were not in the initial result set. THAT one, on large screens, ran at about 6 per second and took up to 2 minutes.

Solution:

- Introduced a service that makes the web service calls preemptively and caches the results for up to 5 minutes. The service runs every 4.5 minutes, so the cache is refreshed before it expires. Everybody got much faster, and 5-minute-old data did not affect their business decisions. Instead of 2 to 20 seconds, those calls now finish in 7 ms, IF the cache is filled, which the service ensures. If the service is down, any client can fill the cache as well, since they all use the same class.

- Removed the background thread that fetched all the extra A stuff. Instead, the app waits until the user clicks the little expando button that shows that information, and THEN goes and gets it.
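The caching half of that fix can be sketched roughly like this. It is a minimal Python sketch, not the actual production code (which was .NET), and the names `SharedResultCache`, `get`, `refresh`, and `run_refresher` are hypothetical. It assumes results are keyed by a string and that the "service" is simply a loop that refreshes every known key every 270 seconds, safely under the 300-second lifetime of a cache entry.

```python
import threading
import time
from typing import Any, Callable, Dict, Tuple


class SharedResultCache:
    """Hypothetical shared cache used by every client AND by the refresh service."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds        # results are served for up to 5 minutes
        self._clock = clock            # injectable clock so expiry can be tested
        self._lock = threading.Lock()
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get(self, key: str, fetch: Callable[[], Any]) -> Any:
        """Return the cached value if still fresh; otherwise fetch and store it."""
        with self._lock:
            entry = self._entries.get(key)
            if entry is not None and self._clock() - entry[0] < self._ttl:
                return entry[1]        # fast path: no web service call at all
        return self.refresh(key, fetch)  # miss or stale: any client can fill it

    def refresh(self, key: str, fetch: Callable[[], Any]) -> Any:
        """Call the slow service and overwrite the entry with a fresh timestamp."""
        value = fetch()                # slow call runs outside the lock
        with self._lock:
            self._entries[key] = (self._clock(), value)
        return value


def run_refresher(cache: SharedResultCache,
                  fetchers: Dict[str, Callable[[], Any]],
                  stop: threading.Event,
                  interval_seconds: float = 270.0) -> None:
    """Background 'service' loop: refresh every key every 4.5 minutes (270 s),
    so entries are replaced before the 5-minute TTL runs out."""
    while not stop.is_set():
        for key, fetch in fetchers.items():
            cache.refresh(key, fetch)
        stop.wait(interval_seconds)    # sleeps, but returns early once stop is set
```

Because clients and the refresher share one class, a client that finds an empty or stale entry just fills it itself, which is what keeps things working when the service is down. This sketch does not stop several clients from refreshing the same stale key at once; that is a simplification.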

Problem introduced by the solution:

- Now, if you click “Expand All” on a large screen, you get 200 threads all trying to expand at the same time. It succeeds, but it thrashes while doing so. That’s a <1% use case, though, so we can live with it.

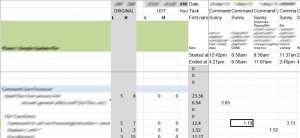

If my client is okay with it, I’d love to post some before and after graphs to show how things changed.

Story #2: Idle Crash

It didn’t happen on my machine or the product manager’s, but on certain machines on the floor, if the app was left sitting in the background, it would crash.

Diagnosis:

- Event log entry #1: .NET blew up, yo.

- Event log entry #2: System.AggregateException()

Research:

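- In .NET 4.0, if a background task started with Task.Factory.StartNew(()=>{}) throws an exception, and nobody observes that exception (by waiting on the task or reading its Exception property), … nothing happens.

- Until the GC runs and the task gets finalized. Then the app blows up.

- It doesn’t happen in .NET 4.5, which changed the default so that unobserved task exceptions no longer crash the process. Because 4.5 installs as an in-place update over 4.0, any machine with 4.5 on it gets the new behavior, which is why my machine ran fine.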

Solution:

- It was blowing up in the code that I changed in Story #1.

- Added a handler for TaskScheduler.UnobservedTaskException to log anything else that might happen, with a full stack trace, so it could be debugged.

- It hasn’t shown up since.

Story #3: Iterating on Requirements

Problem:

Alongside my regular client, I have another client for whom another guy and I are estimating a project. This is a large client, and the scope of the project seemed… fuzzy. Huge. Integrated with a bunch of systems that had not yet been written.

Solution:

- Break the project up into Phases. The client came up with the same idea! Yes!

- Make the first Phase as small as it could be. That way, success on that phase would also clear up architecture problems and provide a target for other systems to code against. It also would not depend on any other system coming online first.

- Collect everything into a single document:

  - Questions
  - Decisions
  - Design
  - Features

Almost everything starts off as a question.

As stuff gets clarified, it moves over to “Decisions”.

“Design” is where larger things (like schemas, interfaces, etc.) can be added.

“Features” was an interesting idea:

- It is a one-line-at-a-time, “if the system has all of these things, then it is complete” list, bulleted and indented.

- When you first start working with the project, you won’t know what most of that stuff is.

- But after you’ve caught up to the “groupthink” on the project, you will. It’s a checklist without content.

- This is the only part of the document that needs to be “complete”; everything else is just talking points, things in flux.

- This is what the estimate actually gets built on.

I can’t say this is my idea. I picked it up from a speaker who talked about his daily process.

So, yep, that’s my software engineering excitement from roughly the last 3–4 weeks. If I were asking questions on this blog, I’d ask: what have you been up to?