It was a good experience.

I had my first Tesla Mobile Service Appointment!

Details of the appointment – later. More important are the various topics I asked the person about. All of this is from my memory, which is faulty, and to cover Teslaperson, I’ll even say my memory can be fillintheblankious.

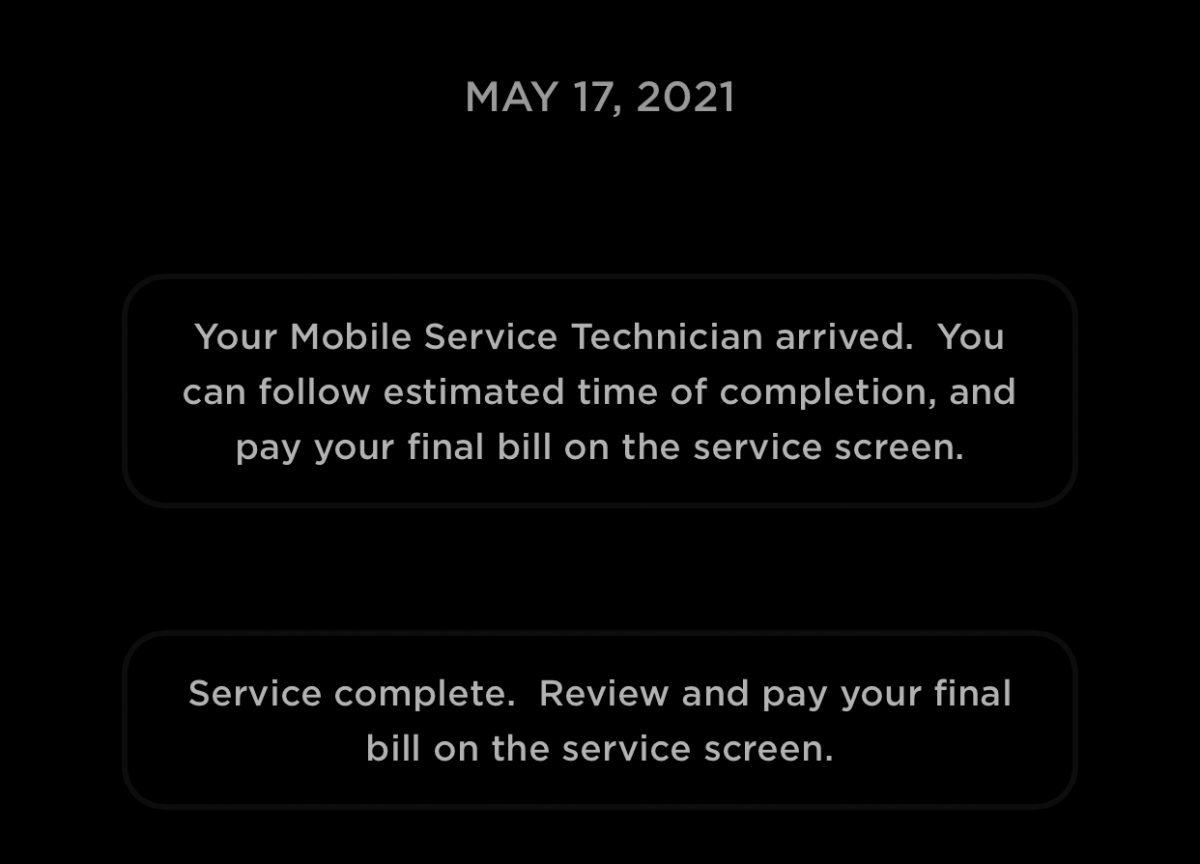

Messaging – they have switched to the Tesla app for messaging; apparently they used text messages before. Many people don’t have notifications turned on, and thus a disconnect.

Service Center in Louisville – not yet, but the numbers are definitely where they need to be to have one. The southern Indiana lease thing didn’t happen, but they have a line on another place. It will be next year before it opens, due to the current tenant’s exit and the remodel. Currently 3 people are working the Louisville market, all local.

Throughput / Backlog – Currently running about 3-4 days out for mobile service in Louisville. Don’t have a lot of Model Y parts in stock yet. If parts need to be ordered, they try to do it within 1-2 days of ticket open, and it takes up to 7 days to get the parts in.

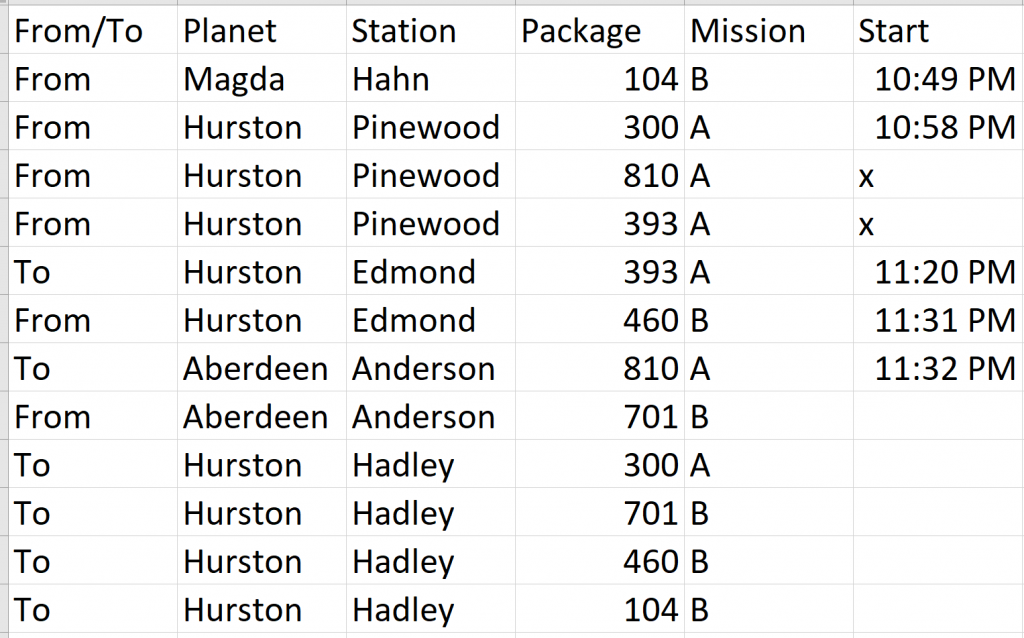

Service Area – within the Louisville area, both my work (Blankenbaker) and my home (Pewee Valley) are covered. They are out at Blankenbaker all the time.

Third party apps like ABRP etc – they’ve had a 3rd party app that tried to inject firmware code into the car, so no, not a good idea. If you have one that’s working and it doesn’t keep your car “alive” by pinging it all the time, yay for you.

Wipers – yes they replace wipers, it’s $__, but if you want you can order the wipers directly and watch the tutorial video on how to change them yourself.

Rotate Tires – yes they can do that. $__. Faster/easier to take the car to (your preferred tire place).

Cabin Filter – yes they can do that. $__. Around here, once every year or two?

Recommended Service – 2 years flush brake fluid, 6 years flush coolant. You will not get a reminder; it’s in the service manual. Brake fluid is hydro-absorb-moisture-ic, so it gets funky over time.

Phone Charging Cables working or not working – the rear seat USB-C ports are power only, so use an authentic cable only; the front seat USB-C ports carry data and power, so most cables work. Power turns off when the car goes to sleep.

If the console has problems, unplug the Sentry stick and format it on a PC. Unpair phones, delete contacts. Fancy emoji characters can cause problems.

Funky Color on Trim – It’s the soap that’s being used (I go to a car wash). I found that if I apply a bit of moisture and rub my thumb over it, it comes right off.

Specific repair – Passenger Seat, one of the controls wasn’t working. Turns out the cable going into the servo motor was not plugged in all the way. Estimated cost: $107. Actual cost: $0. They also filled up the air in my tires and removed a piece of packing plastic that came with the car. The appt was scheduled 7:30-12:30, they showed up about 9am.

It was a good experience.