I’ve previously talked about unit tests vs. integration tests and various database-inclusive testing strategies. Recently I have been dealing with the pain of making sure everything a piece of code needs gets moved between environments – a process fraught with human intervention, forgotten steps, mistakes, and ambiguity. In an IM conversation about this with one really smart guy (Hi Josh!), an Idea was born.

Preliminary to the Idea

We’ve gotten pretty good at getting code between environments. Source control gives us all kinds of tools for branching and merging. There are different flavors of branching and different ways to package code to send it places; my personal favorite is using a build server to do the actual deployment (thus documenting it).

However, every customer I’ve worked with struggles with how to get [the right] database changes into an environment. None of them think they struggle with it, but they all do (well, 3 of the last 4), and a lot more than developers struggle with getting code moved around. The DBAs are usually saying “all you have to do is X”, the developers are saying “you want me to do WHAT?”, and they meet somewhere in the middle.

The standard idealist’s view of this world is: check your database into source control. The tricky bit becomes: using which solution? Visual Studio SQL Server Database Projects? Redgate SQL Source Control? E/R Studio Repository? RoundhousE? I have not yet met a client who does this; usually there is some really weird way that the description of what needs to change gets handed to the DBA, who then takes the changes the rest of the way.

To broaden the scope, the kinds of assets that I’ve seen companies struggle with:

- Database schema changes

- Database data changes

- Configuration entries per environment

- The right list of components being deployed per environment

- All of the above when adding a new environment (essential for feature branching)

Target Audience

Folks working at small companies, where they usually have full access to the production server, might scoff at this. Sorry guys, you are not my current target audience.

The target audience is folks working at large enterprise companies that host their own software, usually those with Sarbanes-Oxley compliance that states: NO DEVELOPER CAN TOUCH THE PROD BOXES, EVER. [sidebar: maybe it doesn’t actually say that, but it’s the excuse given every time]. To a developer, production is a black box.

The target audience is also NOT people writing sellable software, where hopefully 500 customers will run an install 500 times – in those environments, there’s usually a chunk of resources set aside per release to get the installers right. And a lot of VMs to run installs on. Over and over.

Personal peeve: I ended up trying to communicate with other folks about what needed to happen, using a very limited vocabulary, often with edge cases that didn’t fit their language. Sometimes the chasm between our experiences was even larger. For example, in production they had a web farm behind a certain kind of load balancer with a firewall, and in the lower environments where I had access, they didn’t (too expensive, not in the department’s budget). I certainly was not allowed to log in to those things to get a “feel” for them and how to work with them, yet I was expected to tell them exactly what they needed to get it running. Personal solution: Cookies. Shared.

The Birth of the Idea:

At my previous salaried job, I was in charge of adding some stuff to a website so that it could communicate with a web service. This web service was in charge of taking uploaded documents and processing them further, then depositing the results in a shared folder. I added a Test<ReleaseName>.aspx page to that website, which talked to a TestWebService.asmx page that sat next to the normal web service, and they talked about stuff like:

- What identity was the service running under?

- Did the service have read/write/delete access to the drop folder?

- Could the service transfer a LAAAARGE file over the network without dying?
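The first two questions can be answered by a very small probe. Here is a minimal sketch of what such a page might run, not the code I actually wrote back then: `DropFolderProbe` and its report format are made up for illustration, and `Environment.UserName` is only a rough stand-in for "who am I running as" (under impersonation you would likely want something more precise). The large-file question is left out.

```csharp
using System;
using System.IO;

public static class DropFolderProbe
{
    // Writes, reads back, and deletes a throwaway file in 'folder',
    // then reports which identity did it and whether it worked.
    public static string Check(string folder)
    {
        string identity = Environment.UserName; // approximation of the service identity
        string probe = Path.Combine(folder, "probe-" + Guid.NewGuid().ToString("N") + ".tmp");
        try
        {
            File.WriteAllText(probe, "probe");      // needs write access
            string back = File.ReadAllText(probe);  // needs read access
            File.Delete(probe);                     // needs delete access
            if (back != "probe")
                return identity + ": FAILED on " + folder + " (read back unexpected content)";
            return identity + ": read/write/delete OK on " + folder;
        }
        catch (Exception ex) when (ex is UnauthorizedAccessException || ex is IOException)
        {
            // Missing folder, missing permission, network hiccup: say what broke.
            return identity + ": FAILED on " + folder + " (" + ex.Message + ")";
        }
    }
}
```

The point is that the answer comes back as a sentence the build/deploy guy can read aloud, not as a stack trace.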

Within the website and the web service, I made sure to limit the request so that it would only “do work” if the sender was from the developer’s subnet, specifically excluding the subnet that the internet came in on (after they got through the firewall). Everything not recognized was immediately 404’ed.
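For the curious, the subnet check itself is not much code. This is a sketch under assumptions: `TestChannelGate` is a hypothetical name, and the subnet is expressed as a network address plus prefix length, which may not match how your network guys describe it.

```csharp
using System;
using System.Net;

public static class TestChannelGate
{
    // True when 'remote' falls inside the network 'network'/'prefixLength'
    // (for example 10.20.30.0/24). Works on the raw address bytes.
    public static bool IsInSubnet(IPAddress remote, IPAddress network, int prefixLength)
    {
        byte[] a = remote.GetAddressBytes();
        byte[] b = network.GetAddressBytes();
        if (a.Length != b.Length) return false;                      // IPv4 vs IPv6 mismatch
        if (prefixLength < 0 || prefixLength > a.Length * 8) return false;

        int fullBytes = prefixLength / 8;
        int remainingBits = prefixLength % 8;
        for (int i = 0; i < fullBytes; i++)
        {
            if (a[i] != b[i]) return false;                          // whole bytes must match
        }
        if (remainingBits == 0) return true;

        int mask = (0xFF << (8 - remainingBits)) & 0xFF;             // top bits of the next byte
        return (a[fullBytes] & mask) == (b[fullBytes] & mask);
    }
}
```

A page could feed it the caller’s address (in classic ASP.NET, `Request.UserHostAddress`) and answer 404 whenever it returns false.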

The result was I could walk over, hang out with the build/deploy guy (without touching his keyboard and breaking compliance), and have him browse to the test website – and it told him everything that was going on, especially what was broken. We spent a LONG time trying to get the identity to run as the right person with the right access to the right folders, and if I hadn’t built the test pages, it could have gotten really frustrating. (Rather than BUILDGUY=>”no”=>DEVELOPER=>”try”=>PERMISSIONSGUYS=>”done”=>DEVELOPER=>”again?”=>BUILDGUY, it was [DEVELOPER+BUILDGUY]=>”try”=>PERMISSIONSGUYS=>”done”=>[DEVELOPER+BUILDGUY]=>”yay!”)

The Idea: Create Tests

Here’s a sample of the kinds of tests that I’m talking about:

- Can I communicate with web service W1 correctly?

- Does table T1 exist?

- Does table T2 have an entry for E1,E2?

- Does table T3 have a column C1?

- Is there any data that matches this known pattern SQL1 which should no longer exist?

- Does Table T4 have an index I1?

- Does stored procedure SP1 exist?

- Does stored procedure SP1 have xyz in its definition? (this could get fancier in your environment if you have stored procedure revision numbers; mostly trying to ensure that a stored procedure got updated)

- Does any stored procedure still reference XXX in its definition? (when dealing with the case where many stored procedures need to be modified because a table is going away)

- Do at least N stored procedures reference XXX in their definitions? (when dealing with multiple stored procedures being updated because something required got added to a table)

- Can I read the configuration entry for E1?

- Do I have {x/y/z} permission to network path N1?
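Several of the database questions above boil down to "run a COUNT(*) and look at the number". Here is a rough sketch, assuming SQL Server (the `INFORMATION_SCHEMA` views and the `sys.indexes`, `sys.procedures`, and `sys.sql_modules` catalog views). `CountCheck`, `SchemaChecks`, and the `executeCount` delegate are names I made up; in real life the delegate would wrap something like `SqlCommand.ExecuteScalar`, and T1, T3, C1, T4, I1, SP1, and XXX are the placeholders from the list.

```csharp
using System;
using System.Collections.Generic;

public sealed class CountCheck
{
    public string Name { get; }
    public string Sql { get; }
    public Func<int, bool> Passes { get; }   // rule the COUNT(*) must satisfy

    public CountCheck(string name, string sql, Func<int, bool> passes)
    {
        Name = name; Sql = sql; Passes = passes;
    }
}

public static class SchemaChecks
{
    public static readonly List<CountCheck> All = new List<CountCheck>
    {
        new CountCheck("Table T1 exists",
            "SELECT COUNT(*) FROM INFORMATION_SCHEMA.TABLES WHERE TABLE_NAME = 'T1'",
            n => n >= 1),
        new CountCheck("Table T3 has column C1",
            "SELECT COUNT(*) FROM INFORMATION_SCHEMA.COLUMNS WHERE TABLE_NAME = 'T3' AND COLUMN_NAME = 'C1'",
            n => n >= 1),
        new CountCheck("Table T4 has index I1",
            "SELECT COUNT(*) FROM sys.indexes WHERE object_id = OBJECT_ID('T4') AND name = 'I1'",
            n => n >= 1),
        new CountCheck("Stored procedure SP1 exists",
            "SELECT COUNT(*) FROM INFORMATION_SCHEMA.ROUTINES WHERE ROUTINE_TYPE = 'PROCEDURE' AND ROUTINE_NAME = 'SP1'",
            n => n >= 1),
        new CountCheck("No stored procedure references XXX",
            "SELECT COUNT(*) FROM sys.sql_modules m JOIN sys.procedures p ON p.object_id = m.object_id " +
            "WHERE m.definition LIKE '%XXX%'",
            n => n == 0),
    };

    // Runs every check through the supplied query executor and returns one line per check.
    public static List<string> Run(Func<string, int> executeCount)
    {
        var lines = new List<string>();
        foreach (CountCheck check in All)
        {
            int count = executeCount(check.Sql);
            lines.Add((check.Passes(count) ? "PASS " : "FAIL ") + check.Name + " (count=" + count + ")");
        }
        return lines;
    }
}
```

Keeping the query executor as a delegate means the list of checks can be reviewed (and faked in a test) without a live connection.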

The Proposal

- Add a test/configuration channel to every component that reports which assets that component requires in order to function

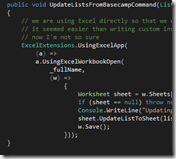

- The structure could be like this (C# pseudo-code, written in Notepad):

    using System;
    using System.Collections.Generic;

    public class Requirement {
        public string Name { get; set; }           // to help us all talk about the same requirement
        public string ReleaseName { get; set; }    // in which release was this introduced
        public DateTime CreationDate { get; set; } // when was the requirement created?
        public string Description { get; set; }    // the "why" of the test, documentation of the asset needed or what role it plays
    }

    public class RequirementResult {
        public Requirement Requirement { get; set; } // which requirement are we talking about?
        public bool? Success { get; set; }           // was it successful? null = don't know
        public string Result { get; set; }           // the result of the test, what's broken, or what worked
        public IEnumerable<RequirementResult> ChildResults { get; set; } // sub-component results
    }

If a component depends on a child component, let it query the child component’s test channel (example: a web service that calls another web service under our control).
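Once results can nest, somebody has to decide what the parent’s overall answer is. One possible rule, sketched here as an assumption rather than a settled design: any failure anywhere means failed, otherwise any "don’t know" means don’t know. `ResultRollup` is a made-up helper, and the two classes are trimmed copies of the structure above so the snippet stands alone.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

public class Requirement
{
    public string Name { get; set; }
}

public class RequirementResult
{
    public Requirement Requirement { get; set; }
    public bool? Success { get; set; }                              // null = don't know
    public string Result { get; set; }
    public IEnumerable<RequirementResult> ChildResults { get; set; }
}

public static class ResultRollup
{
    // false if this result or any descendant failed,
    // null if nothing failed but something is unknown,
    // true only when everything succeeded.
    public static bool? Overall(RequirementResult result)
    {
        var children = result.ChildResults ?? Enumerable.Empty<RequirementResult>();
        var all = new List<bool?> { result.Success };
        all.AddRange(children.Select(child => Overall(child)));     // recurse into sub-components

        if (all.Any(s => s == false)) return false;
        if (all.Any(s => s == null)) return null;
        return true;
    }
}
```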

Lock down this test/configuration channel with a simple, shared piece of code (one the enterprise can sign off on as a whole) that determines whether the channel may run and provides the structure of the test results.
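To make "simple, shared piece of code" a bit more concrete, here is a minimal sketch of what it might look like. Everything in it is an assumption on my part: `TestChannel.Run`, the idea that a check is a `Func<string>` which throws on failure, and the convention that a `null` return means "pretend this page doesn’t exist". The two classes are trimmed copies of the proposed structure so the snippet stands alone.

```csharp
using System;
using System.Collections.Generic;

public class Requirement
{
    public string Name { get; set; }
    public string Description { get; set; }
}

public class RequirementResult
{
    public Requirement Requirement { get; set; }
    public bool? Success { get; set; }
    public string Result { get; set; }
}

public static class TestChannel
{
    // The one shared entry point: refuses to run for callers that are not allowed,
    // and turns every check into a RequirementResult so all components report alike.
    public static List<RequirementResult> Run(
        bool isCallerAllowed,
        IEnumerable<KeyValuePair<Requirement, Func<string>>> checks)
    {
        if (!isCallerAllowed) return null;      // caller should answer 404

        var results = new List<RequirementResult>();
        foreach (var pair in checks)
        {
            var result = new RequirementResult { Requirement = pair.Key };
            try
            {
                result.Result = pair.Value();   // a check returns a description when it works
                result.Success = true;
            }
            catch (Exception ex)
            {
                result.Result = ex.Message;     // and throws when it doesn't; report what broke
                result.Success = false;
            }
            results.Add(result);
        }
        return results;
    }
}
```

Because the gate and the result shape live in one place, that one place is what compliance would need to review, instead of every test page in every component.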

Summary

I think it would be pretty useful.

It would get even more useful over time – with the system getting more documented with each additional release. This is the kind of detailed documentation many folks would drool over – much more useful than the documentation provided by unit tests, which, in my experience, only 10% of developers think are useful.

I think it would help all the folks involved – developers, DBAs, network guys, permissions guys, deployment guys – talk the same language and have the same set of tools to determine if the work was “done”, rather than being at odds with each other: “you need to communicate with me in MY language!”

The next project I get to start from scratch, I’m going to try this.

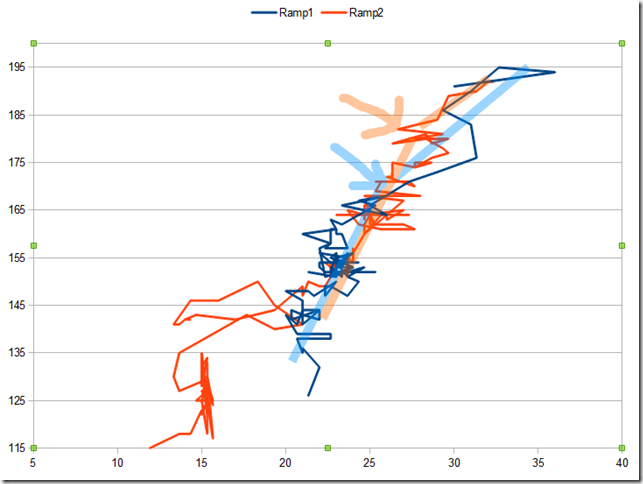

Here’s a slightly different view, courtesy of the free-compare tool at
